www.ptreview.co.uk

19

'26

Open Data Architecture for Physical AI Systems

NVIDIA, Microsoft Azure and Nebius collaborate to deploy a scalable data factory framework for robotics, vision AI agents and autonomous vehicle development.

www.nvidia.com

NVIDIA, in collaboration with Microsoft Azure and Nebius, has introduced an open reference architecture designed to industrialize data generation and processing for physical AI systems, including robotics and autonomous mobility applications.

Context of the Cooperation

The cooperation brings together NVIDIA, Microsoft Azure and Nebius to address a core constraint in physical AI development: the availability of large-scale, high-quality training data.

Physical AI systems such as autonomous vehicles, robotic platforms and vision AI agents require diverse datasets that include rare and safety-critical scenarios. Capturing such data in real-world environments is resource-intensive and often impractical. The cooperation aims to replace manual data collection pipelines with automated, compute-driven data generation workflows.

Technical Solution and Responsibilities

The solution is based on the NVIDIA Physical AI Data Factory Blueprint, an open architecture that structures data pipelines into modular stages: curation, augmentation and evaluation.

- NVIDIA provides the core software stack, including Cosmos-based tools for dataset processing, synthetic data generation and validation. These components enable transformation of limited datasets into expanded training corpora using simulation and generative models.

- Microsoft Azure integrates the blueprint into its cloud-based digital infrastructure, combining services such as IoT operations, data platforms and real-time analytics to support enterprise-scale machine learning workflows.

- Nebius delivers infrastructure-level integration, combining GPU-accelerated computing (RTX PRO 6000 Blackwell-class systems), object storage and serverless execution for end-to-end pipeline deployment.

The architecture operates through automated workflows that refine raw datasets, generate synthetic variations (including long-tail scenarios), and validate outputs using reasoning-based evaluation models. This reduces manual intervention and standardizes dataset quality.
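The three-stage flow described above (curation, augmentation, evaluation) can be illustrated with a minimal Python sketch. It is not the blueprint's actual API: the `Sample` record, the quality thresholds and the `-rain` variant naming are all hypothetical and serve only to show how modular stages might be chained.

```python
from dataclasses import dataclass, replace
from typing import Callable, List


@dataclass(frozen=True)
class Sample:
    """Hypothetical training sample; fields are illustrative only."""
    scene_id: str
    quality: float          # assumed quality score in the range 0..1
    synthetic: bool = False
    tag: str = "nominal"


Stage = Callable[[List[Sample]], List[Sample]]


def curate(samples: List[Sample]) -> List[Sample]:
    """Drop low-quality samples and repeated scene ids."""
    seen = set()
    kept = []
    for s in samples:
        if s.quality >= 0.5 and s.scene_id not in seen:
            seen.add(s.scene_id)
            kept.append(s)
    return kept


def augment(samples: List[Sample]) -> List[Sample]:
    """Add one synthetic long-tail variant per curated sample."""
    variants = [
        replace(s, scene_id=f"{s.scene_id}-rain", synthetic=True, tag="long_tail")
        for s in samples
    ]
    return samples + variants


def evaluate(samples: List[Sample]) -> List[Sample]:
    """Stand-in for an evaluation model: synthetic samples must clear a stricter bar."""
    return [s for s in samples if not s.synthetic or s.quality >= 0.7]


def run_pipeline(samples: List[Sample], stages: List[Stage]) -> List[Sample]:
    """Apply each stage in order, feeding its output to the next."""
    for stage in stages:
        samples = stage(samples)
    return samples


raw = [
    Sample("a", 0.9),
    Sample("a", 0.9),   # duplicate scene, removed during curation
    Sample("b", 0.6),
    Sample("c", 0.2),   # below the curation threshold
]
result = run_pipeline(raw, [curate, augment, evaluate])
print([s.scene_id for s in result])
```

Because each stage has the same list-in, list-out signature, a stage can be swapped or reordered without touching the others, which is the property the modular design is meant to provide.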

An orchestration layer, NVIDIA OSMO, manages distributed workloads across compute environments. It integrates with AI coding agents to automate resource allocation and pipeline execution, supporting scalable industrial automation of data workflows.
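As a rough illustration of what allocating workloads across compute environments involves, the sketch below places pipeline jobs on GPU pools with a simple greedy rule. It does not reflect how NVIDIA OSMO works or what its interface looks like: `Pool`, `Job`, `schedule` and the pool names are hypothetical, and the largest-job-first heuristic is an assumption chosen for brevity.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Pool:
    """Hypothetical compute environment with a number of free GPUs."""
    name: str
    free_gpus: int


@dataclass
class Job:
    """Hypothetical pipeline job with a fixed GPU requirement."""
    name: str
    gpus: int


def schedule(jobs: List[Job], pools: List[Pool]) -> Dict[str, Optional[str]]:
    """Greedy placement: largest jobs first, each on the pool with most free GPUs.

    Mutates the pools' free_gpus counts. Jobs that fit nowhere map to None.
    """
    placement: Dict[str, Optional[str]] = {}
    for job in sorted(jobs, key=lambda j: j.gpus, reverse=True):
        candidates = [p for p in pools if p.free_gpus >= job.gpus]
        if not candidates:
            placement[job.name] = None
            continue
        target = max(candidates, key=lambda p: p.free_gpus)
        target.free_gpus -= job.gpus
        placement[job.name] = target.name
    return placement


pools = [Pool("cloud-a", 8), Pool("cloud-b", 6)]
jobs = [Job("curate", 2), Job("augment", 8), Job("evaluate", 4), Job("extra", 1)]
print(schedule(jobs, pools))
```

A production orchestrator would also handle queuing, retries and data locality; the sketch only shows the allocation decision itself.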

Deployment and Implementation

The blueprint is deployed across cloud environments, allowing developers to configure data pipelines without building dedicated infrastructure.

On Azure, the system is implemented as part of an open physical AI toolchain, enabling integration with enterprise IT systems. Nebius deploys the architecture within its AI cloud, supporting production-grade pipelines with managed inference and data services.

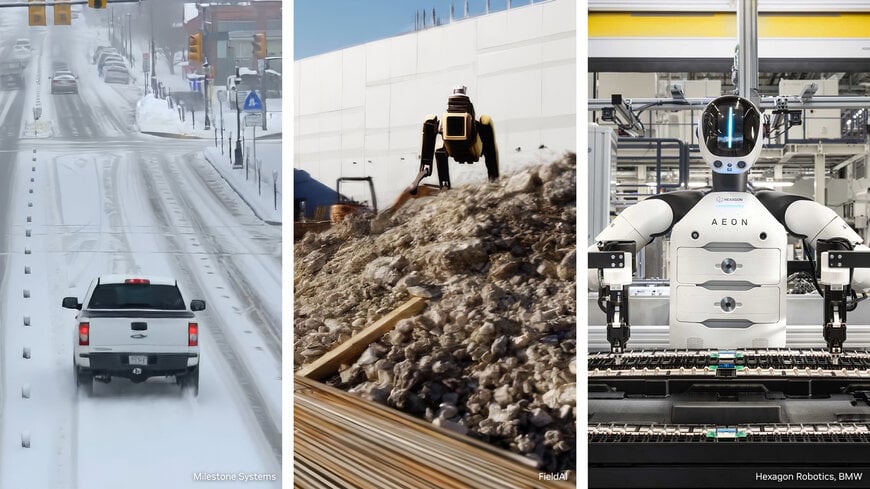

Early adopters, including FieldAI, Hexagon Robotics, Linker Vision and Teradyne Robotics, are testing the system in perception, mobility and reinforcement learning workflows. Additional implementations on Nebius infrastructure support video analytics and robotic applications.

Applications and Use Cases

The architecture targets multiple industrial domains:

- Autonomous driving systems requiring edge-case scenario training

- Industrial and service robotics using reinforcement learning

- Vision AI agents for surveillance and analytics

Use cases include simulation-based training, validation of perception models under variable conditions, and continuous dataset expansion for adaptive systems.

Results and Expected Impact

The cooperation introduces a shift from data collection to data generation, where compute resources are used to systematically produce training datasets. This approach reduces dependency on real-world data acquisition while improving coverage of rare scenarios.

Operationally, the framework is intended to make AI training pipelines more scalable, more repeatable and easier to validate. By integrating cloud-based digital infrastructure with automated workflows, the system supports faster iteration cycles and more consistent model performance across complex environments.

Edited by an industrial journalist, Sucithra Mani, with AI assistance.

www.nvidia.com
